New Companion AI: Bringing Alfie to Life

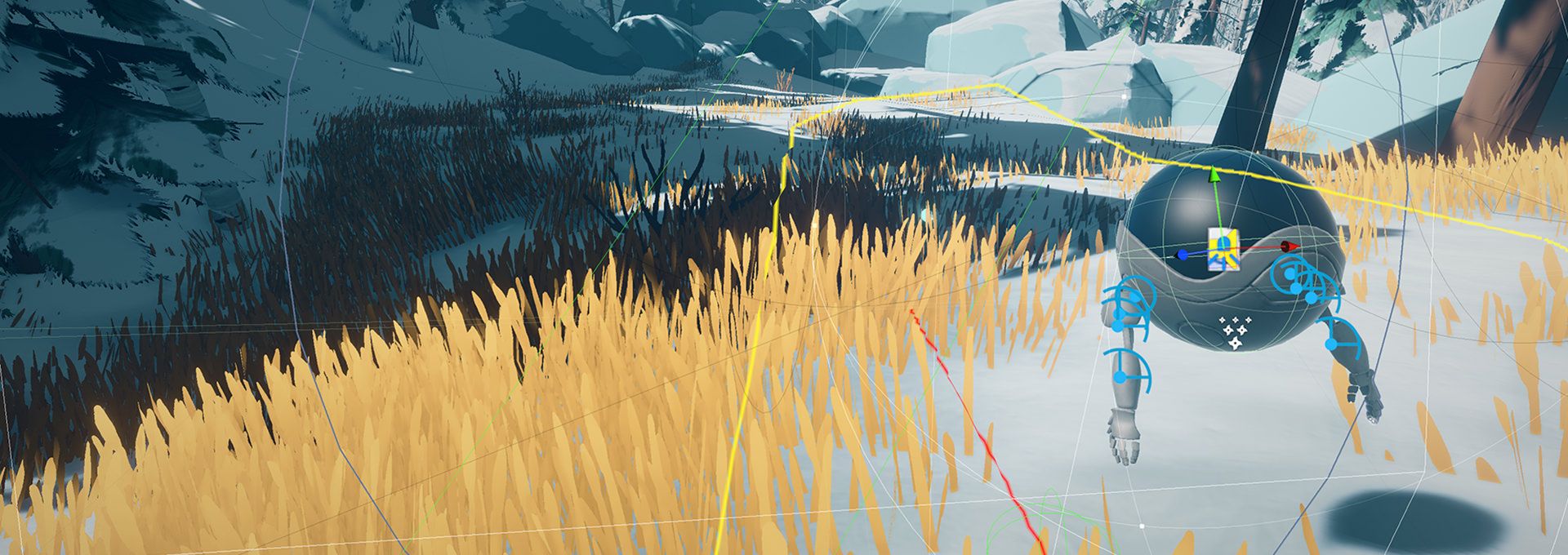

We went back to the drawing board and completely re-built Alfie's companion AI from the ground up to support physics-based movement, vastly improved navigation, interactions, proximity rules and much more.

We were thrilled to end 2022 with a bang thanks to inclusions in Day of the Devs, AdventureX and The MIX. It was an honor to be selected for such acclaimed events, but we were even more excited to receive such valuable feedback from everyone who tried out the demo in person and on Steam!

Thanks to that feedback, we've been working hard to fix bugs, add new features and mechanics, record more voice-over and lots more. However, one thing that really stood out was the need to improve our companion AI system for Alfie.

We knew we couldn't settle for "good enough" when your therapy bot plays such a pivotal role in both the story and gameplay. So, we made the hard decision to go back to the drawing board and completely re-build the AI companion system from the ground up, taking into account all of the feedback and lessons learned from the last year of demos and play tests.

You'll get to see the improvements for yourself soon with an updated demo later this spring, but for now, here's a taste of what's new:

Physics-Based Movement

Our goal from day one was to make Alfie feel "alive" in this world, but there's always been a nagging feeling that his movement didn't "feel" quite right. We realized this was because it wasn't physically based. Making a robot float with physics isn't the easiest thing in the world, but we knew we were onto something when it started being a blast just moving him around in test scenes!

The new system actually simulates thruster forces rather than just moving him from point A to point B, which gives him a real sense of weight and momentum. His subtle bobbing and rotation are now a natural side effect of the thruster balancing mechanics. He also now reacts naturally to speed, turning and obstacles, which helps to believably ground him in the world.
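To give a feel for what "simulating thruster forces" can mean, here is a minimal sketch in Python. It is not the game's code: it assumes a single vertical thruster driven by a spring-damper (PD) controller, and every name and constant (`HoverBody`, `thruster_force`, the stiffness and damping values) is made up for illustration. The point is that the body is never teleported to its target height; it only ever receives a force, so weight and momentum fall out of the integration.

```python
from dataclasses import dataclass

GRAVITY = 9.81  # m/s^2, constant downward acceleration (illustrative value)


@dataclass
class HoverBody:
    """Hypothetical floating body, reduced to one vertical axis."""
    mass: float = 2.0      # kg
    height: float = 0.0    # m above the ground
    velocity: float = 0.0  # m/s, positive is up


def thruster_force(body, target_height, stiffness=40.0, damping=12.0, max_force=60.0):
    """Upward thrust that cancels gravity and steers toward the target height."""
    error = target_height - body.height
    force = body.mass * GRAVITY + stiffness * error - damping * body.velocity
    # A thruster can only push up, and only so hard.
    return max(0.0, min(max_force, force))


def step(body, target_height, dt=1.0 / 60.0):
    """Advance the body by one frame using semi-implicit Euler integration."""
    acceleration = thruster_force(body, target_height) / body.mass - GRAVITY
    body.velocity += acceleration * dt
    body.height += body.velocity * dt


def simulate(target_height=1.5, seconds=10.0, dt=1.0 / 60.0):
    """Let a body starting on the ground settle at the target height."""
    body = HoverBody()
    for _ in range(int(seconds / dt)):
        step(body, target_height, dt)
    return body
```

Because the controller is slightly underdamped with these values, the body overshoots and bobs a little before settling, and a shove (adding to `velocity`) is absorbed the same way instead of being ignored.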

What's more, a physically based system allows for real collisions with the world. This means he doesn't fly through rocks, trees or other obstacles in his way. It also means that if he's blocking Kai's path, you can simply push him out of the way. This gives us so much more creative freedom to really bring his character out in fun and surprising ways.

Navigation

One of our pillars is player agency in exploration, so we didn't want you to just be following Alfie the whole time. However, having Alfie simply try to stay in front of you caused many players to get completely lost in the forest. While fitting for the scenario, it wasn't exactly the experience we had in mind. Alfie having effectively no concept of where he is or where he's going was probably the biggest issue we saw from demo players.

What's great about the games industry is the sharing of knowledge. We happened across a GDC talk given by developers of the companion AI system in BioShock that helped inspire our own "goal side" type of system. Basically, we know the general area where we want the player to end up, and we can calculate a path in that direction. Alfie can then bias his spatial positioning towards that path. The result is a much better balance: you keep the agency to explore the world and find your own way, without ending up hopelessly lost and frustrated.
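One way to picture the "goal side" idea is the sketch below. It is an assumption-laden illustration rather than our actual implementation or the approach from the talk: the path is a hypothetical 2D polyline, and the helper names (`lookahead_point`, `companion_target`) and distances are invented. The companion's preferred spot sits a short distance from the player, on the side that faces a point further along the path.

```python
import math


def closest_point_on_segment(p, a, b):
    """Return the point on segment a-b that is closest to p."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    length_sq = dx * dx + dy * dy
    if length_sq == 0.0:
        return a
    t = ((p[0] - a[0]) * dx + (p[1] - a[1]) * dy) / length_sq
    t = max(0.0, min(1.0, t))
    return (a[0] + t * dx, a[1] + t * dy)


def lookahead_point(player, path, lookahead=5.0):
    """Point `lookahead` units further along the path than the player's nearest spot."""
    best_dist, index, point = math.inf, 0, path[0]
    for i in range(len(path) - 1):
        candidate = closest_point_on_segment(player, path[i], path[i + 1])
        dist = math.dist(player, candidate)
        if dist < best_dist:
            best_dist, index, point = dist, i, candidate
    remaining = lookahead  # assumed to be greater than zero
    while index < len(path) - 1:
        seg_end = path[index + 1]
        seg_len = math.dist(point, seg_end)
        if seg_len >= remaining:
            f = remaining / seg_len
            return (point[0] + f * (seg_end[0] - point[0]),
                    point[1] + f * (seg_end[1] - point[1]))
        remaining -= seg_len
        point, index = seg_end, index + 1
    return path[-1]  # ran out of path: aim at the goal itself


def companion_target(player, path, follow_distance=2.0, lookahead=5.0):
    """Spot near the player, offset toward where the path leads next."""
    ahead = lookahead_point(player, path, lookahead)
    dx, dy = ahead[0] - player[0], ahead[1] - player[1]
    length = math.hypot(dx, dy)
    if length == 0.0:
        return player
    return (player[0] + follow_distance * dx / length,
            player[1] + follow_distance * dy / length)
```

The player is never pulled anywhere in this sketch. Only the companion's preferred position changes, so simply noticing where he tends to hover is enough of a hint about which way the story continues.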

Proximity Rules

Most games with exploration have a map of some sort to help the player navigate. However, since Arctic Awakening takes place in an unknown part of the wilderness where the characters are completely lost and stranded, the idea of a map just didn't sit right with us. What we do have is Alfie, who is always quick to remind Kai of his "proximity rule" mandated by the court. The problem is that this rule was only selectively enforced in the gameplay, allowing many players to get hopelessly lost with no drone in sight.

The solution was to actually build the proximity rules directly into the companion AI. Regardless of what Alfie may be doing at the time, if the player strays too far, Alfie will drop everything and come find Kai. He is then able to help lead you back to where you need to be to continue progressing in the story, where he'll pick back up what he was doing. This is never forced on you, just available as a guide when you choose to use it.
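As a rough illustration of a rule that can interrupt anything and then hand control back, here is a small Python sketch. Everything in it is hypothetical: the task names, the `leash` and `rejoin` distances, and the `Companion` class are invented, and it skips the "lead you back" step to stay short.

```python
import math
from dataclasses import dataclass
from typing import Optional, Tuple

FIND_PLAYER = "find_player"  # hypothetical name for the interrupting task


@dataclass
class Companion:
    position: Tuple[float, float]
    task: str = "inspect_object"          # whatever he happens to be doing
    suspended_task: Optional[str] = None  # remembered while he looks for the player

    def update_task(self, player_position, leash=30.0, rejoin=5.0):
        """Apply the proximity rule, then return the task that should run."""
        distance = math.dist(self.position, player_position)
        if self.task != FIND_PLAYER and distance > leash:
            # Player strayed too far: drop everything, but remember the task.
            self.suspended_task = self.task
            self.task = FIND_PLAYER
        elif self.task == FIND_PLAYER and distance <= rejoin:
            # Reunited: pick the old task back up.
            self.task = self.suspended_task
            self.suspended_task = None
        return self.task
```

Using two different distances (a long leash to trigger, a short one to release) keeps the companion from flickering between tasks when the player hovers right at the boundary.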

Interactions

Since Alfie isn't just there to tag along, it was important for him to have a fully functional interaction system, something that the old companion system just wasn't capable of. Alfie can now procedurally inspect anything, pick up and collect objects, open doors, aid Kai in various ways and more. This finally allows him to be an autonomous character in the game, just as he is in the story.

IK & Auto Grip

In order to fully support these interactions, we needed to implement inverse kinematics (IK) and auto grip into Alfie's arms and hands. We've long had these systems in Kai to allow natural and procedural movements when interacting with objects; however, these had to be adapted for the different bone structure and physics-based movement of Alfie.

Along with animations, we can now procedurally move Alfie's arms anywhere we want to support interactions with virtually anything in the game world. Whether picking up an object, pulling the handle on a door or really any other physical action, Alfie can dynamically wrap his fingers around the contact point to further ground him in the world.
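For readers new to IK, the core idea fits in a few lines. The sketch below is a textbook two-bone solver in 2D using the law of cosines, not Alfie's actual rig: the bone lengths, function names and the flat 2D setup are all assumptions for illustration. Given where the hand should be, it works backwards to the shoulder and elbow angles.

```python
import math


def _clamp(value, low, high):
    return max(low, min(high, value))


def two_bone_ik(target_x, target_y, upper, lower):
    """Return (shoulder_angle, elbow_bend) in radians for a 2D two-bone arm.

    The shoulder sits at the origin. Targets out of reach are clamped so the
    arm stretches as far as it can toward them.
    """
    dist = math.hypot(target_x, target_y)
    dist = _clamp(dist, abs(upper - lower), upper + lower)
    dist = max(dist, 1e-9)  # avoid dividing by zero when the target is on the shoulder

    # Law of cosines: interior angle at the elbow.
    cos_elbow = (upper ** 2 + lower ** 2 - dist ** 2) / (2 * upper * lower)
    elbow_bend = math.pi - math.acos(_clamp(cos_elbow, -1.0, 1.0))

    # Angle between the upper arm and the straight line to the target.
    cos_offset = (upper ** 2 + dist ** 2 - lower ** 2) / (2 * upper * dist)
    shoulder = math.atan2(target_y, target_x) - math.acos(_clamp(cos_offset, -1.0, 1.0))
    return shoulder, elbow_bend


def hand_position(shoulder, elbow_bend, upper, lower):
    """Forward kinematics, handy for checking the solver's answer."""
    elbow_x = upper * math.cos(shoulder)
    elbow_y = upper * math.sin(shoulder)
    return (elbow_x + lower * math.cos(shoulder + elbow_bend),
            elbow_y + lower * math.sin(shoulder + elbow_bend))
```

Feeding the solved angles into `hand_position` should land the hand back on the target, which is a quick sanity check when adapting a solver like this to a different bone structure.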

What's Next?

While there are still plenty of fixes and polish remaining, this marks the completion of probably the biggest remaining core system in the game. We're moving full steam ahead on building out the rest of the story scenes, level locations and voice-over, and in general just pulling the whole experience together into a polished final release. We can't wait for all of you to get your hands on it, and we'll have more updates along the way in the coming months.

The best way to stay informed is on our Discord, Twitter and newsletter.